The Future of Enterprise AI: Why Small Language Models (SLMs) are the Strategic Choice for Data-Secure Organizations

February 3, 2026

In the race to adopt generative AI, many enterprises first looked to the "giants": Large Language Models (LLMs) such as GPT-4 or Gemini. These models are marvels of general reasoning, but a shift is under way. Forward-thinking organizations are realizing that for business-critical tasks and data sovereignty, smaller is often smarter.

At eDelta Corporation, we are seeing growing demand for Small Language Models (SLMs). These models offer a pathway to AI integration that prioritizes privacy, cost-efficiency, and low-latency performance, all within your own controlled environment.

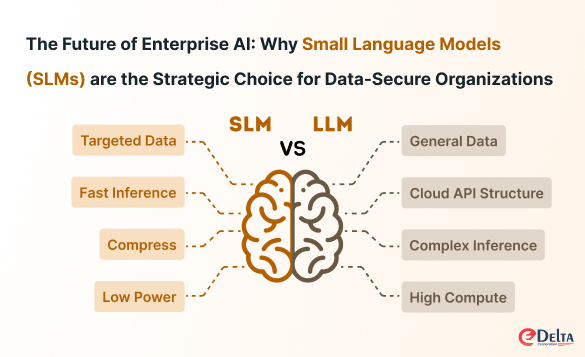

SLM vs. LLM: Understanding the Trade-off

To understand why SLMs are gaining traction, we must look at how they differ from their larger counterparts based on the "Agile & Edge" vs. "Powerful & Cloud" framework.

1. Targeted Intelligence vs. General Knowledge

LLMs are trained on trillions of tokens of general internet data. However, your enterprise doesn't need an AI that knows everything; it needs an AI that knows your business.

- SLMs focus on Targeted/Domain Data.

- Trained on smaller, high-quality datasets specific to your industry, they can achieve higher accuracy on niche tasks than a general-purpose model.

2. Local Privacy vs. Cloud Dependency

Data security is one of the biggest hurdles to AI adoption in regulated sectors. Sending sensitive intellectual property to a cloud-based API can be a major risk.

- SLMs are designed for Edge and Local Deployment.

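- Because they have fewer parameters and can be compressed further with techniques such as Quantization (storing weights at lower numeric precision) and Pruning (removing weights that contribute little), they can run on your own local servers or on mobile-class GPUs. When the model is hosted locally, your data never has to leave your environment.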
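To make the compression idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is an illustration under stated assumptions, not how any particular toolkit implements it: the function names, the sample weights, and the 3-billion-parameter model size are all hypothetical.

```python
# Illustrative sketch only: names, weights, and model size are assumptions.

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

def model_size_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

weights = [0.42, -1.27, 0.05, 0.88, -0.31]  # made-up sample weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # [42, -127, 5, 88, -31]
print(f"largest rounding error: {max_error:.5f}")

# Storage for a hypothetical 3-billion-parameter model at different precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {model_size_gb(3_000_000_000, bits)} GB")
```

Each weight is rounded to the nearest step, so the error per weight stays within half a step (`scale / 2`). The storage figures cover weights only; real deployments also need memory for activations and runtime overhead.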

3. Speed and Throughput

In a production environment, latency is the enemy.

- Cloud-hosted LLMs often show variable latency (roughly 200 ms to more than 1 s) because every request crosses the network and depends on complex remote infrastructure and high-end APIs.

- SLMs running locally can provide Fast Inference (under 50 ms on suitable hardware) and high throughput. This makes them ideal for real-time applications where instant responses are mandatory.
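Latency figures like these are worth verifying on your own hardware. The sketch below shows one way to time requests and report median and tail latency against the 50 ms budget mentioned above. The `fake_local_model` function is a hypothetical stand-in that simply sleeps; in practice you would swap in your real inference call.

```python
import math
import time

def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(len(ordered) * pct / 100))
    return ordered[rank - 1]

def measure_latency_ms(fn, prompts):
    """Time each call to fn and return per-request latencies in milliseconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        fn(prompt)
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies

def fake_local_model(prompt):
    """Hypothetical stand-in for a local inference call."""
    time.sleep(0.002)  # pretend the model takes about 2 ms
    return prompt.upper()

latencies = measure_latency_ms(fake_local_model, ["ping"] * 20)
p50 = percentile(latencies, 50)
p95 = percentile(latencies, 95)
print(f"p50={p50:.1f} ms, p95={p95:.1f} ms, within 50 ms budget: {p95 < 50}")
```

Reporting a tail percentile such as p95 alongside the median matters because real-time applications are judged by their slowest responses, not their average ones.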

4. Cost-Efficiency & ROI

Training and running an LLM is a massive financial undertaking involving expensive GPU clusters and high compute costs.

- SLMs are highly Cost-Efficient.

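- They can be fine-tuned in hours or days rather than months, using parameter-efficient techniques like LoRA (Low-Rank Adaptation), which trains a small set of added weights while the original model stays frozen. This results in a significantly better ROI for specific enterprise tasks.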
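A rough sketch helps show why LoRA cuts fine-tuning cost. Instead of updating a full weight matrix W, LoRA trains two small matrices, B and A, of rank r and adds their scaled product to the frozen W. The layer size (4096 x 4096), the rank of 8, and the helper names below are illustrative assumptions, not figures from any specific model.

```python
# Illustrative sketch only: dimensions, rank, and helper names are assumptions.

def lora_trainable_params(d_out, d_in, rank):
    """Parameters in the low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def lora_forward(W, A, B, x, alpha, rank):
    """Compute W x + (alpha / rank) * B (A x), with W left frozen."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scaling = alpha / rank
    return [b + scaling * u for b, u in zip(base, update)]

# Trainable parameters for one hypothetical 4096 x 4096 layer.
d_out, d_in, rank = 4096, 4096, 8
full = d_out * d_in
lora = lora_trainable_params(d_out, d_in, rank)
print(full, lora, full // lora)  # 16777216 65536 256

# Tiny numeric check with a rank-1 adapter.
W = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weights
A = [[1.0, 1.0]]              # shape (1, 2)
B = [[0.5], [0.0]]            # shape (2, 1)
print(lora_forward(W, A, B, [2.0, 3.0], alpha=2, rank=1))  # [7.0, 3.0]
```

In this example the adapter trains 256 times fewer parameters than a full update of the same layer, which is the main reason fine-tuning fits on modest hardware and shorter schedules.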

Why Enterprises are Focusing on SLMs Now

The strategy for AI has shifted. While LLMs remain powerful for broad knowledge, SLMs are the "Agile" solution for specific, secure business operations.

The Enterprise SLM Advantage:

- Privacy++: Keep proprietary data behind your own firewall.

- Customization: Quick fine-tuning to speak your company’s unique "language."

- Agility: Deploy on existing hardware without massive cloud compute budgets.

How eDelta Corporation Can Help

Transitioning to an AI-driven workflow requires more than just picking a model; it requires modernizing your underlying infrastructure. Whether you are looking to secure your edge deployment or modernize your cloud environment to support localized AI, eDelta Corporation provides the expertise to bridge the gap between complex technology and real business value.

The goal isn't just to have AI; it's to have AI that you own, control, and trust.